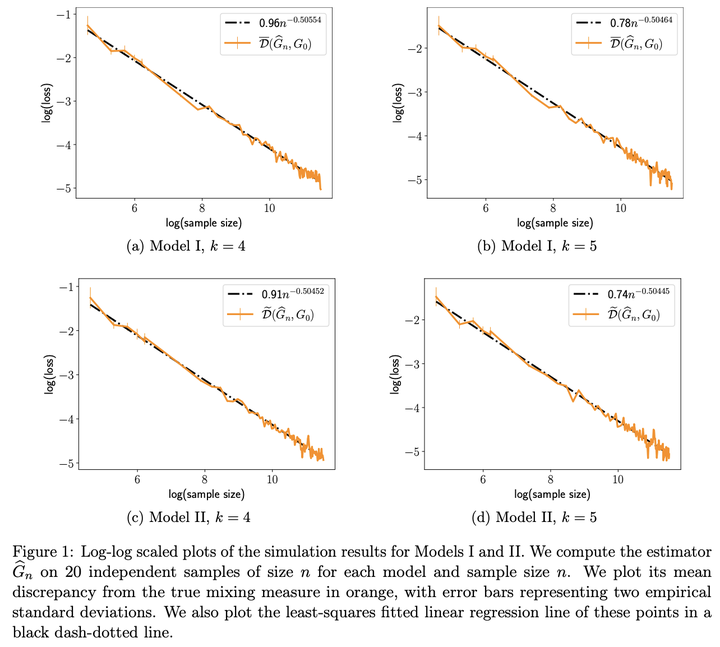

Convergence Rates for Mixtures of Experts

Apr 1, 2023

TrungTin Nguyen

Postdoctoral Research Fellow

A central theme of my research is data science at the intersection of statistical learning, machine learning and optimization.

Publications

Risk Bounds for Mixture Density Estimation on Compact Domains via the h-Lifted Kullback–Leibler Divergence

We consider the problem of estimating probability density functions based on sample data, using a finite mixture of densities from some …

A General Theory for Softmax Gating Multinomial Logistic Mixture of Experts

The mixture-of-experts (MoE) model incorporates the power of multiple submodels via gating functions to achieve greater performance in …